xXx_GR00T_xXx

Gaming with Robot Foundation Models

Gaming as an adult is just not what it used to be: You spend your days at work, and when you finally make it home, you’re often too tired to really enjoy some quality gaming time. As with most things in life, the obvious solution is delegation and automation.

Robot Foundation Models (RFMs) are one of the most promising current AI innovations, allowing robots to take over more and more human tasks and chores. So we did the only sensible thing - we taught an RFM to take over the exhausting part of your day so you can fully focus on what really matters.

That’s right, we trained NVIDIA’s GR00T to play Counter-Strike, so you can have more time to immerse yourself in the exciting world of work! Wait, what did you think we were going to do?

This blog post gives a brief introduction to RFMs, explains how they can be adapted to games, and summarizes our journey so far of teaching GR00T how to play Counter-Strike.

What are Robot Foundation Models?

Robot Foundation Models (RFMs) are the latest AI innovation in robotics and might just be the next breakthrough for physical AI. They promise to end the era of task-specific, robot-specific models by applying the idea of foundation models to robotics. The following sections give an introduction to RFMs and NVIDIA Isaac GR00T N1.5 specifically. If you’re an RFM pro already, feel free to skip these sections.

The concept of foundation models has been well understood in Computer Vision and Natural Language Processing for quite some time now. Foundation models are pre-trained on a broad dataset and can easily be adapted to a wide range of downstream tasks. Large Language Models (LLMs) are a recent example of foundation models that have dominated Natural Language Processing. Their world knowledge and general capabilities make it easy to adapt them to a wide range of tasks through prompting or fine-tuning.

About two years ago, the first concepts of RFMs started to surface from the scientific community. In vastly over-simplified terms, they can be summarized as a combination of these two simple ideas:

- What if we just took an LLM with vision capabilities, gave it some instructions and a camera image of the robot and its surroundings, and fine-tuned it to generate control signals for the robot?

- Let’s take all the data, from ALL the robots and ALL the tasks and just train one big model with it and see if it can outperform specialized robotics models.

Despite its simplicity, this approach turned out to be quite effective if done right. While today’s RFMs have moved on in many ways, they still incorporate these two key principles. One such RFM is NVIDIA’s Isaac GR00T - that’s the one that turned into a pro gamer.

NVIDIA Isaac GR00T N1.5

GR00T was first released in March 2025 and has received a handful of model updates since. For this project we chose GR00T N1.5, the newest open-source version at the time. GR00T was trained on a large dataset, consisting mostly of bimanual manipulation tasks. This is the domain where GR00T shines and what it’s usually used for - controlling two robot hands and arms. Coincidentally, two hands are all you need to play a video game, so Counter-Strike should not be a problem, right?

In the video below, you can see one of TNG’s GR00T fine-tunes performing a dice sorting task in the real world using a LeRobot SO-101 Robotic Arm. The model runs at ~10Hz, observes the environment around it and smoothly controls the robot arms to perform this somewhat complex task.

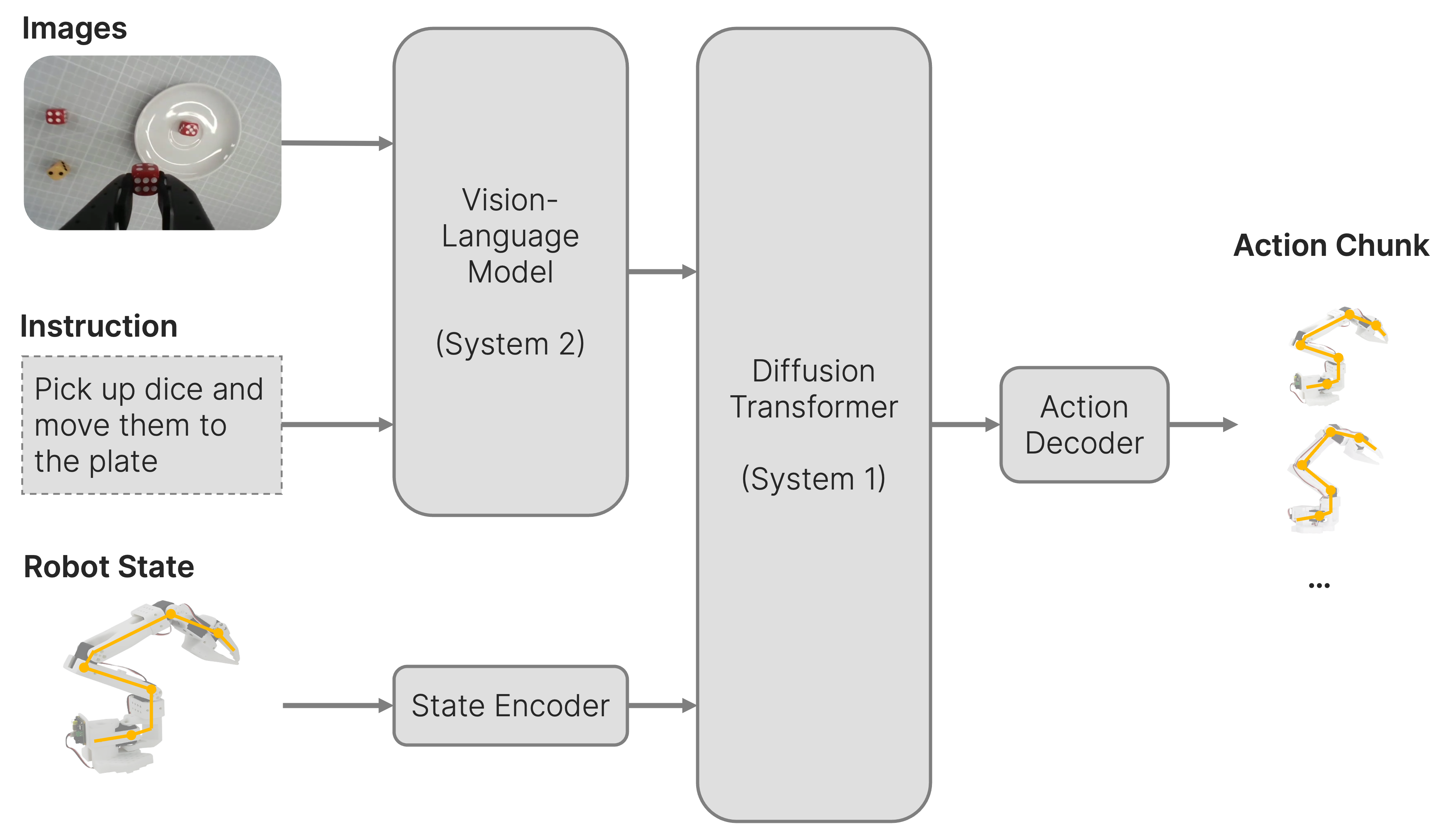

To understand where exactly GR00T gets its broad capabilities from, let’s have a closer look under the hood. Below, you can see a simplified schematic of GR00T N1.5’s architecture.

GR00T is a Deep Learning Model that uses multi-modal input to generate a prediction for future robot movements. It uses three different kinds of inputs:

- Images: These are typically images from one or more cameras on the robot. By default, GR00T doesn’t use temporal context, so it only sees the robot and its surroundings at the current point in time.

- Language Instructions: These are similar to prompts in LLMs - because technically, that’s exactly what they are.

- Robot State (Proprioception): Information about the state of the robot, most often the positions of the joints. It can also include sensor readings or velocities.

From these inputs, GR00T can predict what actions to take, a so-called action chunk. This is a sequence of movements or joint states that should be performed by the robot over the next period of time.

The GR00T model architecture consists of three major parts:

- The Vision-Language Model (System 2)

- The Diffusion Transformer (System 1)

- State Encoder and Action Decoder

The terms “System 1” and “System 2” correspond to the two modes of thinking proposed by Daniel Kahneman in his book “Thinking, Fast and Slow”.

In the book, System 1 is fast, automatic, and intuitive, operating with little to no effort. This mode of thinking allows us to make quick decisions and judgments based on patterns and experiences.

System 2, on the other hand, is slow, deliberate, and conscious, requiring intentional effort. This type of thinking is used for complex problem-solving and analytical tasks where more thought and consideration are necessary. This is a common architecture pattern in RFMs, where System 2 does the high-level planning and System 1 handles the real-time motion planning.

Vision-Language Model

The first part is the Vision-Language Model (VLM), which is basically just a fancy word for a multimodal LLM that can process images in addition to text.

In GR00T N1.5, this is literally a fine-tune of NVIDIA’s own Eagle 2.5 LLM with 2.1B parameters. That’s pretty small for an LLM, but pretty big for a robotics model. It’s capable of basic reasoning, making it well suited for System 2 style tasks.

To avoid catastrophic forgetting and preserve all of its visual reasoning capabilities, the VLM is frozen during pre-training. One exception is the Vision Encoder - the eyes of the LLM - which can be trained during robotics fine-tuning.

The VLM processes the input images and language instructions and produces a neural representation of the current scene and the task at hand, which is then fed into the next part of the model.

Diffusion Transformer

The Diffusion Transformer (DiT) is somewhat similar to diffusion models that you might know from image generation. Instead of generating images, it generates action chunks via multiple iterations of denoising. It takes the robot state as input and cross-attends on the VLM output to generate the action chunk.
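To make the "multiple iterations of denoising" a bit more tangible, here is a minimal toy sketch in plain Python. It is not GR00T's implementation: `toy_velocity` is a hypothetical stand-in for the DiT that simply cheats by knowing the answer, and the number of steps and the time convention (noise at t = 0, clean actions at t = 1) are assumptions for illustration.

```python
import random

def toy_velocity(x, t, target):
    """Hypothetical stand-in for the DiT: points from the current sample toward `target`.

    A real model would predict this from the robot state and the VLM features.
    Here we use the known answer so that the sketch stays runnable.
    """
    return [(g - xi) / (1.0 - t) for xi, g in zip(x, target)]

def denoise_action_chunk(target, num_steps=4, seed=0):
    """Start from Gaussian noise and take `num_steps` Euler steps toward an action chunk."""
    rng = random.Random(seed)
    x = [rng.gauss(0.0, 1.0) for _ in target]  # pure noise at t = 0
    dt = 1.0 / num_steps
    for k in range(num_steps):
        t = k * dt
        v = toy_velocity(x, t, target)
        x = [xi + dt * vi for xi, vi in zip(x, v)]  # one denoising iteration
    return x

# A flattened toy "action chunk" with four values.
chunk = denoise_action_chunk(target=[0.2, 0.5, 0.8, 0.5])
```

The important part is the loop: the chunk starts out as random noise and gets refined a little in every iteration, with the model being queried once per step.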

During each inference step, the Diffusion Transformer has to plan a fine-grained motion trajectory, which in that sense corresponds to System 1. This is where the fast and slow thinking analogy ends though: Ironically, both systems are time-synced so they are technically running at the same speed.

Encoder and Decoder

Finally, we have the State Encoder and the Action Decoder. They are the abstraction layers for different kinds of robots, so-called robot embodiments. The State Encoder translates the robot-specific state information to a universal representation that GR00T understands. The Action Decoder performs the reverse mapping and translates a universal action representation to a robot-specific action chunk.

This is necessary to account for the variety of robot embodiments: Robots can have different numbers and configurations of joints, wheels and sensors that might also need to be controlled in different ways. Think of a humanoid robot vs. a robot arm vs. a vacuum robot. Some might be controlled through absolute end-effector positions, some via relative joint angles, some with high-level control inputs like throttle and steering angle. GR00T solves this problem by using different encoders and decoders for each robot embodiment, while the core of the model, the VLM and the Diffusion Transformer, can stay the same. This way, GR00T preserves its foundation knowledge while being able to adapt to arbitrary robots.
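The per-embodiment adapter idea can be sketched in a few lines of Python. Everything here is a toy under stated assumptions: `Embodiment`, `SHARED_DIM`, `pad` and `shared_core` are hypothetical names, and the "encoders" and "decoders" are trivial functions rather than learned networks. The point is only the structure: one shared core, swappable input and output layers.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

Vector = List[float]

@dataclass
class Embodiment:
    encode_state: Callable[[Vector], Vector]   # robot-specific state -> shared space
    decode_action: Callable[[Vector], Vector]  # shared space -> robot-specific action

SHARED_DIM = 4  # hypothetical size of the shared representation

def pad(v: Vector, size: int) -> Vector:
    """Zero-pad or truncate a vector to a fixed size."""
    return (list(v) + [0.0] * size)[:size]

def shared_core(latent_state: Vector) -> Vector:
    """Placeholder for VLM + DiT: identical for every embodiment."""
    return [0.5 * x for x in latent_state]

EMBODIMENTS: Dict[str, Embodiment] = {
    "robot_arm": Embodiment(
        encode_state=lambda joints: pad(joints, SHARED_DIM),
        decode_action=lambda latent: latent[:3],  # three joint commands
    ),
    "gamepad": Embodiment(
        encode_state=lambda sticks: pad(sticks, SHARED_DIM),
        decode_action=lambda latent: latent[:4],  # two sticks with two axes each
    ),
}

def predict(embodiment_name: str, state: Vector) -> Vector:
    emb = EMBODIMENTS[embodiment_name]
    return emb.decode_action(shared_core(emb.encode_state(state)))

arm_action = predict("robot_arm", [0.2, 0.4, 0.6])
pad_action = predict("gamepad", [1.0, 0.0, 0.5, 0.5])
```

Adding a new robot (or game) in this picture means adding one more entry to the table, while `shared_core` stays untouched.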

So to wrap up: GR00T is a powerful model that can solve complex tasks based on visual input and language prompts and can adapt to pretty much ANY control scheme.

Wait… Any control scheme? Even Counter-Strike?

GR00T for Gaming

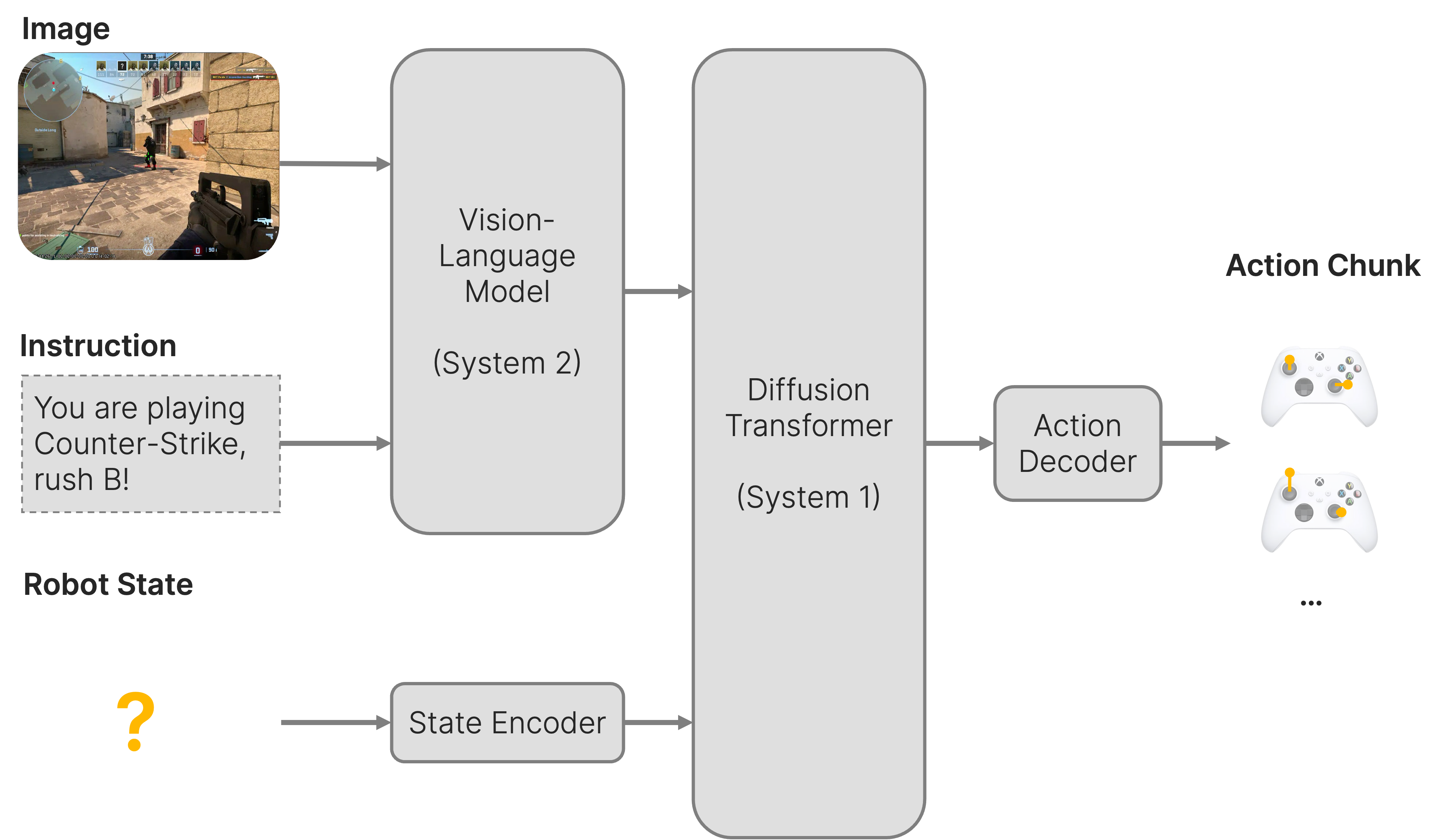

If you think about it for long enough, a video game is just another robot embodiment for GR00T. The game character interacts with the world around it, is steered by control inputs and views its surroundings through a camera. It’s actually not that different from controlling a drone or wheeled robot in the real world. So why should GR00T not be able to play video games?

The idea is simple: The camera inputs become a screenshot of the game, the robot state is past actions, and we can set the prompt to “You are playing X. Your objective is to Y”. The action chunk consists of video game control signals. Coincidentally, GR00T works best with state-relative control signals for movement, that is, when the control signals correspond to changes in position or rotation rather than absolute positions and rotations. This is the same way the player is controlled in Counter-Strike: the movement commands lead to relative changes in position and rotation.
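As a rough sketch of this mapping, the three GR00T inputs could be bundled like this. The class and function names (`GameObservation`, `make_prompt`, `build_observation`) and the four-value action layout are hypothetical and only serve as an illustration; they are not taken from the GR00T codebase.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class GameObservation:
    frame: List[List[int]]  # screenshot of the game window (grayscale here for brevity)
    state: List[float]      # "robot state": the most recently applied control signals
    prompt: str             # language instruction for the VLM

def make_prompt(game: str, objective: str) -> str:
    return f"You are playing {game}. Your objective is to {objective}."

def build_observation(frame, past_actions, game, objective, action_dim=4):
    # Before the first action exists, fall back to an all-zero state.
    state = list(past_actions[-1]) if past_actions else [0.0] * action_dim
    return GameObservation(frame=frame, state=state, prompt=make_prompt(game, objective))

obs = build_observation(
    frame=[[0] * 4 for _ in range(4)],
    past_actions=[[0.5, 0.5, 0.0, 0.0], [0.6, 0.5, 0.0, 1.0]],
    game="Counter-Strike Deathmatch",
    objective="shoot all enemies",
)
```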

As a control scheme, we chose to go with gamepad input over keyboard and mouse. While this decision would instantly spark a very emotional discussion in most gaming-related sub-reddits, it actually makes a lot of sense for our use case.

Just like most gamers, GR00T has some pretty strong opinions about its control scheme: Like most other neural networks, it prefers continuous and somewhat normalized data. This makes the iconic WASD controls for moving the player somewhat unattractive for us: key presses are binary. You can either stand or walk at full speed, which is not a very intuitive concept for neural networks. Mouse inputs for turning are at least continuous - you can turn at whatever speed you want - but they’re not normalized. There is no upper limit on how much you can turn between two frames; you could do a full 360 if you move your mouse fast enough. This concept is rather hard for GR00T to understand. Ideally, the control signals are normalizable to a [0,1] range. Gamepad controls avoid a lot of these issues: movements are performed using the analogue sticks, which produce continuous and normalizable data by design. With analogue triggers, we also get continuous [0,1] values for aiming and shooting. That’s not necessary for Counter-Strike, but helpful for GR00T.
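The difference between the input types is easy to see in code. The sketch below assumes XInput-style raw ranges (signed 16-bit stick axes, 8-bit triggers), which is an assumption on our side and may differ for other gamepad APIs; the function names are made up for this example.

```python
def normalize_stick(raw: int) -> float:
    """Map a signed 16-bit stick axis (-32768..32767) to [0, 1]; centered is roughly 0.5."""
    clamped = max(-32768, min(32767, raw))
    return (clamped + 32768) / 65535

def normalize_trigger(raw: int) -> float:
    """Map an 8-bit trigger value (0..255) to [0, 1]."""
    return max(0, min(255, raw)) / 255

def wasd_to_axis(negative_key: bool, positive_key: bool) -> float:
    """Keyboard equivalent of one stick axis: only three possible values, nothing in between."""
    return 0.5 + 0.5 * (int(positive_key) - int(negative_key))

half_speed = normalize_stick(16384)    # a stick can express "walk slowly"
full_speed = wasd_to_axis(False, True)  # a key can only express "walk" or "stand"
```

A mouse delta, in contrast, has no natural counterpart to the fixed 16-bit range, which is exactly why it can't be normalized without picking an arbitrary cut-off.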

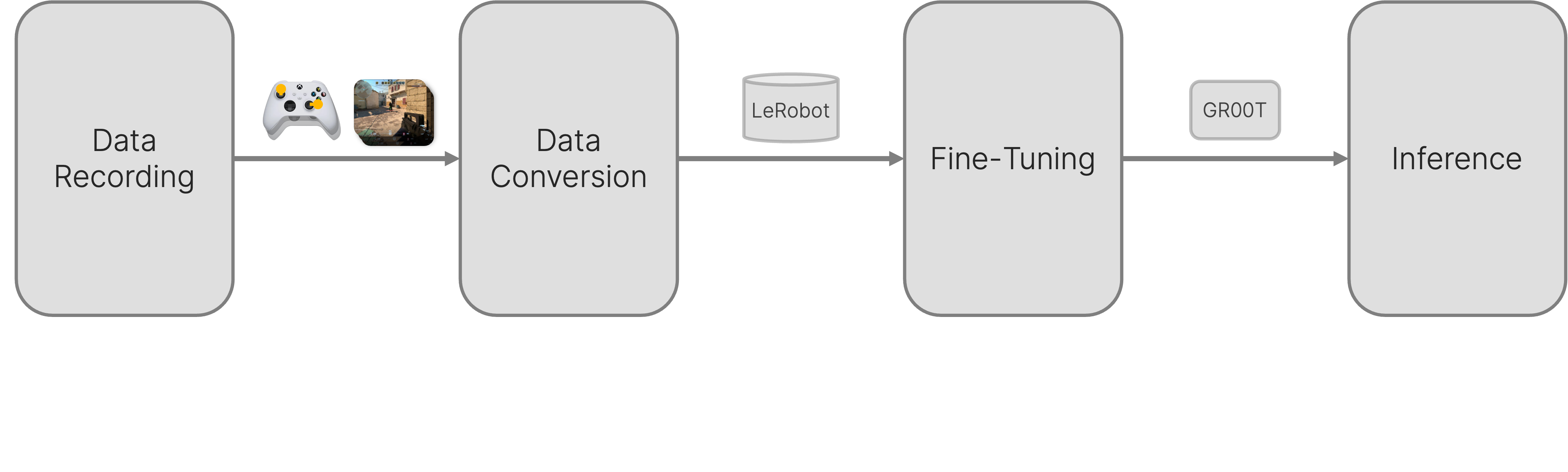

Out of the box, GR00T would not be able to play very well. It has never played video games before, never seen a video game or had a gamepad “in its hands”. So we have to put in some effort to teach GR00T to play Counter-Strike. We approach this challenge in four steps: Collect training data, convert it into the correct format, fine-tune GR00T and deploy the model in Counter-Strike.

Collecting a Dataset

There are many different ways to fine-tune GR00T. The most straightforward is so-called Imitation Learning, where the neural network learns from expert demonstrations. For your normal robotics use-case, you could record these expert demonstrations in simulation environments or using tele-operation setups.

Below, you can see a typical tele-operation setup for robotic arms: A human can manipulate a second set of robot arms, the so-called leaders, and the motion is transferred to the actual arms, the followers, in real-time.

A dataset containing 20-50 high quality demonstrations is usually enough to learn simple tasks, thanks to GR00T’s strong foundation knowledge. For the dice sorting task above, we recorded 100 complete sequences of sorting the dice.

In Counter-Strike, recording the “expert” demonstrations is as simple as playing the game and recording everything. Sounds like fun, until you remember that you have to play it with a gamepad. Of course, Counter-Strike as a PC-only game has no native support for gamepads. On the technical side, this was quickly resolved by Steam Input, a compatibility layer that allows you to monkey-patch gamepad support into any game. On the human side, we had some trouble finding a CS expert who also happened to be proficient with a gamepad - those two things seem to be mutually exclusive - so we selected a console player as our “expert” and switched the game mode to deathmatch to remove most of the game-specific complexity.

For recording the data, we wrote a script that records both the game window and the gamepad inputs in sync at 60 Hz and stores them with timestamps. In a second step, we convert the data to the LeRobot dataset format. This data format is needed for fine-tuning GR00T. Here we inject our prompt for the VLM backbone: “You are playing Counter-Strike Deathmatch. Shoot all Enemies.”, fill the “robot state” with past control signals and scale the video down to 512x512 pixels. While we lose some information in the visual channel here, reducing the resolution is crucial to keep GR00T N1.5 from getting too slow or consuming too much memory. For our setup, we chose the action chunk to be of length 16, so roughly a quarter of a second of gameplay actions at 60 Hz.
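The windowing logic behind this conversion can be sketched as follows. This deliberately does not reproduce the LeRobot on-disk format; `make_samples` and the dictionary keys are hypothetical, and using only the single previous action as "robot state" is a simplifying assumption.

```python
RECORD_HZ = 60
CHUNK_LEN = 16
PROMPT = "You are playing Counter-Strike Deathmatch. Shoot all Enemies."

def make_samples(frames, actions, chunk_len=CHUNK_LEN):
    """Pair each frame with the previous action (as state) and the next `chunk_len` actions."""
    samples = []
    # Start at 1 so a previous action exists; stop where a full chunk still fits.
    for i in range(1, len(actions) - chunk_len + 1):
        samples.append({
            "image": frames[i],
            "state": actions[i - 1],
            "prompt": PROMPT,
            "action_chunk": actions[i:i + chunk_len],
        })
    return samples

chunk_seconds = CHUNK_LEN / RECORD_HZ  # 16 / 60, so a bit more than a quarter of a second

# Toy recording: 100 "frames" and 100 one-dimensional actions.
frames = list(range(100))
actions = [[float(i)] for i in range(100)]
samples = make_samples(frames, actions)
```

Every recorded frame yields one training sample, so even a short recording session at 60 Hz produces a lot of (heavily overlapping) samples.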

At the time of writing, we have recorded a couple of hours of demonstrations with different “experts” on this setup.

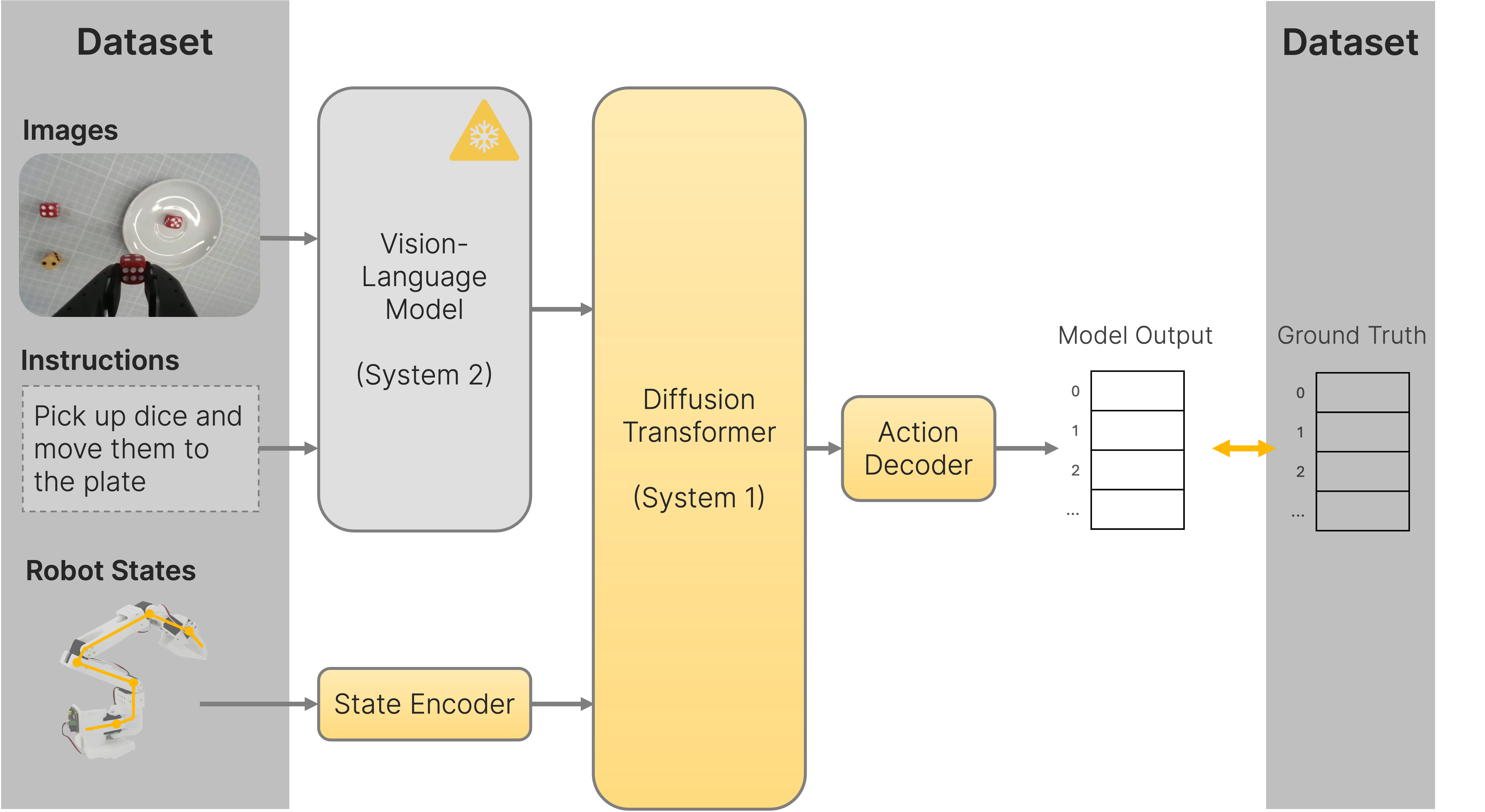

Fine-Tuning via Imitation Learning

The idea behind Imitation Learning is pretty simple: We want GR00T to learn the same behavior that is present in the dataset. So when given an image, prompt and robot state from a random point in time in the dataset, we want GR00T to generate an action chunk that is identical to the actions that were taken in the dataset.

In practice, this can be achieved through simple supervised learning. In each training step, we sample the model inputs from a random timestamp in the dataset, feed them into GR00T, and calculate the loss as the difference between the predicted action chunk and the ground truth action chunk. For the loss function, we stick with flow matching, which is the default for all sorts of diffusion models.
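For the curious, a single flow matching training step looks roughly like the toy below. It is a plain-Python sketch under assumptions: the linear interpolation between noise and actions and the time convention (t = 0 is noise, t = 1 is the clean chunk) are one common formulation, not necessarily the exact one GR00T uses, and `predict_velocity` is a hypothetical stand-in for the model.

```python
import random

def flow_matching_loss(predict_velocity, action_chunk, rng):
    """Mean squared error between predicted and target velocity for one training sample."""
    noise = [rng.gauss(0.0, 1.0) for _ in action_chunk]
    t = rng.random()  # random point on the path from noise to data
    noisy = [(1 - t) * n + t * a for n, a in zip(noise, action_chunk)]
    target = [a - n for n, a in zip(noise, action_chunk)]  # direction from noise to data
    pred = predict_velocity(noisy, t)
    return sum((p - q) ** 2 for p, q in zip(pred, target)) / len(target)

ground_truth = [0.2, 0.5, 0.8, 0.5]

def oracle(noisy, t):
    """A "perfect" model that knows the ground truth chunk."""
    return [(a - x) / (1 - t) for a, x in zip(ground_truth, noisy)]

def untrained(noisy, t):
    """A model that always predicts zero velocity."""
    return [0.0 for _ in noisy]

oracle_loss = flow_matching_loss(oracle, ground_truth, random.Random(0))
untrained_loss = flow_matching_loss(untrained, ground_truth, random.Random(0))
```

Note that the model is never asked to output the action chunk directly. It only learns in which direction to nudge a noisy chunk, which is what the iterative denoising at inference time relies on.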

Just like during pre-training, we freeze the VLM and effectively only train the Diffusion Transformer, the Encoder and the Decoder. This way, the VLM stays untouched and all use-case-specific learning happens in the Diffusion Transformer, the State Encoder and the Action Decoder. For simpler tasks, one could also get away with training only the Encoder and Decoder, while keeping the Diffusion Transformer frozen as well. With this, you rely entirely on the foundational capabilities of the pre-trained DiT and just learn the specifics of your robot embodiment, saving a lot of memory and also speeding up training. We decided against it for our use case, as we assumed that a DiT trained almost exclusively on manipulating items with two robot arms would perform very poorly in playing a video game without further training. In hindsight, we’re actually really impressed that the model was able to learn this new concept at all.

We ran our Counter-Strike fine-tuning run overnight on one NVIDIA RTX 5090. Due to the relatively high image resolutions during training, we require at least 32GB of VRAM for this. After watching the loss go down for 12 hours, we’re ready to deploy our pro-gamer model: xXx_GR00T_xXx

Running xXx_GR00T_xXx

While fine-tuning requires a lot of resources, GR00T inference can be done on rather modest hardware. We, however, chose an NVIDIA RTX 4090 for this. On Ubuntu with the latest drivers and TensorRT optimizations, we can run GR00T at 20Hz. What seems unplayable to gamers is actually not much of a problem for GR00T. Every 50ms it predicts an action chunk covering roughly 267ms, so we are way faster than real-time.

To avoid just waiting around in-game until GR00T is finished generating the next action chunk, we employ another optimization called Real-Time Action Chunking (RTC). It allows us to start the GR00T inference while the player is still moving. In very simple terms, RTC informs GR00T about how the player will move during the inference step, allowing GR00T to take this motion into account. To reduce reaction time, we can continuously run GR00T inference, restarting as soon as it finishes with the freshest data. Since we’re doing this every 50ms, we only need to perform 3 actions of an action chunk before the next action chunk is ready. With both of these optimizations, we get a model that is both responsive AND smooth.
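The timing behind this can be written down as a small simulation. This is a heavily simplified sketch of the scheduling only: how the model is actually conditioned on the already committed actions is left out, `predict_chunk` is a hypothetical stand-in for a GR00T inference call, and we assume that actions are executed at the 60 Hz recording rate.

```python
CONTROL_HZ = 60    # assumed rate at which single actions are executed
INFERENCE_HZ = 20  # rate at which fresh action chunks become available
CHUNK_LEN = 16

STEPS = CONTROL_HZ // INFERENCE_HZ        # 3 actions are executed per inference
CHUNK_MS = 1000 * CHUNK_LEN / CONTROL_HZ  # one chunk covers about 267 ms

def run_control_loop(predict_chunk, first_chunk, num_ticks):
    """Execute one action per tick and swap in a new chunk whenever an inference finishes."""
    executed = []
    chunk, start = first_chunk, 0  # chunk[i] is the action planned for tick start + i
    for tick in range(num_ticks):
        if tick > 0 and tick % STEPS == 0:
            # The inference launched at `tick - STEPS` has just finished. It was told which
            # actions would be executed in the meantime (the prefix) and continues from them.
            launch = tick - STEPS
            prefix = chunk[launch - start:tick - start]
            chunk, start = predict_chunk(launch, prefix), launch
        executed.append(chunk[tick - start])
    return executed

def toy_predict_chunk(launch, prefix):
    """Toy model: keeps the committed prefix and plans "action value = tick index" after it."""
    return list(prefix) + [float(launch + i) for i in range(len(prefix), CHUNK_LEN)]

first = [float(i) for i in range(CHUNK_LEN)]
trajectory = run_control_loop(toy_predict_chunk, first, num_ticks=12)
```

In this toy setup every chunk only contributes 3 executed actions, although it plans 16. The remaining 13 are a safety margin in case an inference takes longer than expected.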

With xXx_GR00T_xXx running smoothly in real-time, all that remains is to connect it to Counter-Strike. For this, we use the same window capture as for collecting our dataset, and feed the control signals from GR00T through a gamepad emulator. And just like that, GR00T is ready to play Counter-Strike.

Model Revisions

During the development process, we created different versions of xXx_GR00T_xXx. The following section details some milestones along the way.

The project started with a one-day hackathon, where we fine-tuned GR00T on the open-source Counter-Strike Deathmatch dataset as a throwaway PoC. While the data quality was not perfect for our specific use-case with awkward frame-rates, resolutions, input modalities and a major version mismatch (CS:GO vs CS2), we were still pleasantly surprised.

xXx_GR00T_xXx V1 was able to navigate de_dust2 - with very choppy motion due to discrete movement commands and no real-time action chunking - and also engage enemies under lab conditions. Not in any way practical, but a lot better than nothing. Good enough at least to show that this idea has potential.

After implementing real-time action chunking and investing in a proper data recording pipeline, we trained xXx_GR00T_xXx V3 (j00nas). For this, we recruited a highly skilled FPS veteran - albeit on keyboard and mouse - and forced him to collect roughly 30 minutes of Deathmatch gameplay on de_dust2 with a gamepad. His pain was our gain: j00nas navigates Dust 2 with ease, peeking corners, engaging enemies, reloading tactically. What he lacks in aim, he makes up for in tactical finesse, giving him a solid score of 90 against bots and an 8/20 KD ratio.

From the limited data, j00nas had also gained a good understanding of different weapons, their properties and manual of arms: using the scope of sniper rifles, holding down the trigger on machine guns and taking single, careful shots with pistols. Apart from the bad aim, we found j00nas doing a pretty good job at copying the player-specific behaviour from the training data: Reloading after one shot, occasionally shooting chickens and spamming the “stop recording training data” button at the end of the match.

Finally, we managed to find a console gamer among our ranks who was willing to spend a little over an hour of paid work time “collecting training data” on de_dust2. We trained xXx_GR00T_xXx V6 (t00ni), which - to the surprise of absolutely no-one - was blessed with significantly better aim, scoring a 26/18 KD with 299 points against bots, about half of what the human “expert” console gamer had achieved during training data collection.

Based on these impressive results, we decided to check if xXx_GR00T_xXx was a dust2 deathmatch one-trick pony or if it had built some general knowledge that could generalize to other maps, game modes or even games.

Generalization

During its fine-tuning, xXx_GR00T_xXx had only ever “played” Counter-Strike 2 Deathmatch on Dust 2. Not expecting much, we still wanted to see how well xXx_GR00T_xXx could apply its capabilities to previously unseen scenarios.

The most obvious first step was to try out other maps in CS2. We tried a variety including cs_italy. To our surprise, xXx_GR00T_xXx navigated the previously unseen maps with ease, engaging with enemies, shooting chickens and only sometimes getting caught up on fences and invisible walls. Generally the routes seemed sensible and well planned. Even in scenarios with limited vision or straight up staring at a wall, xXx_GR00T_xXx seemed to make smart navigation choices, hinting to us that it had also learned to read and understand the mini-map.

Impressed by these results, we wanted to push xXx_GR00T_xXx to its limits - by playing a completely different game… well almost.

We stuck with the genre of First-Person Shooters to give xXx_GR00T_xXx a fair chance and chose Call of Duty: Modern Warfare 2 (2009) as our torture test. The game features completely novel mechanics: sprinting, aiming down sights, no chickens as well as different guns, different graphics, different objectives. After setting up our pipeline, we sent xXx_GR00T_xXx off to battle with no further fine-tuning, adjustment to the prompt or briefing.

We watched xXx_GR00T_xXx get absolutely slaughtered in a regular mission - it was overwhelmed by the bloody screen - and then decided to go for something simpler: The Pit, a training level with no enemies, just harmless paper targets and all the time in the world. Visibly confused, xXx_GR00T_xXx made it out of the starting gate, walking - not sprinting - aimlessly around in the court before eventually opening fire on the first target from the hip. Not sure of what to do, xXx_GR00T_xXx wandered the first stage of the map a while longer before eventually shooting the second target after around 2 minutes. All in all, not the best performance in this level, but also much better than random actions, and very impressive for a model that had only ever seen “real humans” instead of paper targets in an hour of CS2 Deathmatch on Dust 2.

While obviously xXx_GR00T_xXx isn’t a miraculous jack of all trades when it comes to gaming, we are still quite impressed by just how well it handled novel, “out-of-distribution” scenarios.

Traditionally in Computer Vision, one would take all kinds of careful measures to add variance to the training data, avoid over-fitting and out-of-distribution scenarios during inference. We took none of these measures, shoved around an hour of raw gameplay into GR00T and ended up with a model that not only understands Dust 2 deathmatch but also does a decent job on completely different maps and games. Even just the fact that a model that was specifically designed to handle two robotic arms can be rewired to play Counter-Strike overnight and seemingly recycle its foundational knowledge for this is still quite remarkable.

Ongoing Work

While xXx_GR00T_xXx is showing some first impressive results, it’s still lacking some crucial capabilities compared to human gamers. Our current work focuses on closing the gap by giving GR00T some of these capabilities.

Temporal Understanding

By default, GR00T views the world on a frame-by-frame basis with no concept of temporal context or memory apart from historic actions from real-time action chunks. This leads to situations where xXx_GR00T_xXx will instantly forget about enemies as soon as they briefly drop out of the line of sight. xXx_GR00T_xXx frequently ends heated close-quarters battles by just walking off like nothing ever happened when briefly losing line of sight, or zooms in with scoped weapons only to completely forget why and zoom out again. While hilarious, the overall gaming performance could definitely benefit from being able to understand the temporal context, also for tracking objects in motion as well as planning and remembering long-term strategies.

Out of the box, GR00T implements a “temporal horizon” for observations that allows us to supply multiple past camera images, actions and robot states. While this sounds like a promising way to add temporal context, it turned out to be impractical, leading to prohibitively large compute cost and memory usage. This is because the number of tokens that Eagle has to process increases linearly with the number of input images, which grows its self-attention cost quadratically and enlarges the DiT cross-attention along with it. Combined with the already high screen resolution of xXx_GR00T_xXx, even 3 frames of history ended up overwhelming our RTX 4090 workstations during inference and also our RTX Pro 6000 Server during fine-tuning.
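A quick back-of-the-envelope calculation shows why a few extra frames hurt so much. The numbers below are hypothetical placeholders (`TOKENS_PER_IMAGE` depends on the resolution and the vision encoder, `ACTION_TOKENS` on the chunk length), so only the growth rates matter: the token count grows linearly with the number of images, pairwise self-attention over these tokens grows quadratically, and cross-attention from a fixed number of action tokens grows with the token count.

```python
TOKENS_PER_IMAGE = 256  # hypothetical; depends on resolution and vision encoder
ACTION_TOKENS = 16      # hypothetical: one query token per action in the chunk

def attention_sizes(num_images):
    """Rough counts of token pairs that the attention layers have to score."""
    vlm_tokens = num_images * TOKENS_PER_IMAGE
    return {
        "vlm_tokens": vlm_tokens,
        "self_attention_pairs": vlm_tokens ** 2,
        "cross_attention_pairs": ACTION_TOKENS * vlm_tokens,
    }

single_frame = attention_sizes(1)
with_history = attention_sizes(4)  # current frame plus 3 frames of history
```

With these placeholder numbers, going from 1 to 4 images quadruples the token count but multiplies the number of self-attention pairs by 16.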

Instead of using the built-in features of GR00T N1.5, we are experimenting with different approaches like providing a history of frames at a lower resolution to keep the compute and memory overhead small. A first PoC looked promising and we are currently developing and testing different versions to figure out the remaining nitty gritty details.
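One possible way to picture such a low-resolution history is a small ring buffer of downsampled frames. This is purely an illustrative sketch, not a description of our actual implementation: `downsample`, `FrameHistory`, the history length and the pooling factor are all made up for this example, and frames are plain nested lists of grayscale values.

```python
from collections import deque

def downsample(frame, factor):
    """Average-pool a 2D grayscale frame (list of rows) by an integer factor."""
    height, width = len(frame) // factor, len(frame[0]) // factor
    return [
        [
            sum(frame[y * factor + dy][x * factor + dx]
                for dy in range(factor) for dx in range(factor)) / factor ** 2
            for x in range(width)
        ]
        for y in range(height)
    ]

class FrameHistory:
    """Keeps the last `length` frames at reduced resolution and drops older ones."""

    def __init__(self, length=3, factor=4):
        self.factor = factor
        self.frames = deque(maxlen=length)

    def push(self, frame):
        self.frames.append(downsample(frame, self.factor))

    def context(self):
        return list(self.frames)  # oldest frame first

history = FrameHistory(length=2, factor=4)
for value in (1, 2, 3):
    history.push([[value] * 8 for _ in range(8)])  # toy 8x8 frames
context = history.context()
```

Pooling by a factor of 4 reduces the pixel count per history frame by a factor of 16, which is what keeps the additional token count manageable.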

Audio Inputs

Another thing that xXx_GR00T_xXx doesn’t have is a proper audio setup. Out of the box, GR00T perceives the world through visual cues only. However, in games like Counter-Strike, being able to hear the world around you can give a significant competitive advantage. Reacting to distant gunshots or nearby footsteps could make the difference between a kill and a death.

Hacking audio into GR00T is anything but trivial. In an ideal solution, we would want Eagle to be able to perceive both image and audio inputs. GR00T ships with an image encoder that has been specifically fine-tuned in conjunction with Eagle to generate tokens that Eagle can understand. While there are some audio encoders out there that can produce tokens, we would have to do a full fine-tune of Eagle to make them work together. And that’s just not feasible.

Instead, we decided to take a shortcut. Our approach is to reuse the image encoder in an unintuitive way: Turn the audio into an image and feed that into the image encoder. This is actually quite common practice in other domains like music generation. Riffusion, for example, generates music with fine-tuned image generation models. These models were trained to generate so-called mel spectrograms, a visual representation of songs, that can be converted back into audio for listening.

We follow a similar strategy: Convert one-second chunks of game audio into spectrograms and feed them into a second instance of the vision encoder. Since spectrograms are a rather unintuitive type of visual information to the untrained eye, we unfreeze the vision encoder during fine-tuning to allow adaptation to the audio domain.
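To illustrate the audio-to-image step, here is a minimal spectrogram sketch in plain Python. It is a simplification under assumptions: it computes a linear-frequency magnitude spectrogram with a naive windowed DFT and leaves out the mel filterbank, and the tiny sample rate and frame size are hypothetical values chosen so the slow DFT stays fast. In practice one would use an FFT from an audio or numerics library.

```python
import cmath
import math

def spectrogram(samples, frame_size=64, hop=32):
    """Magnitude spectrogram: rows are time frames, columns are frequency bins."""
    window = [0.5 - 0.5 * math.cos(2 * math.pi * n / (frame_size - 1))
              for n in range(frame_size)]  # Hann window
    rows = []
    for start in range(0, len(samples) - frame_size + 1, hop):
        frame = [s * w for s, w in zip(samples[start:start + frame_size], window)]
        rows.append([
            abs(sum(x * cmath.exp(-2j * math.pi * k * n / frame_size)
                    for n, x in enumerate(frame)))
            for k in range(frame_size // 2 + 1)
        ])
    return rows

def to_grayscale(rows):
    """Scale log-magnitudes to 0..255 so the result can be treated as an image."""
    logs = [[math.log1p(v) for v in row] for row in rows]
    peak = max(max(row) for row in logs) or 1.0
    return [[round(255 * v / peak) for v in row] for row in logs]

SAMPLE_RATE = 6400  # hypothetical, kept tiny for the naive DFT
tone = [math.sin(2 * math.pi * 800 * n / SAMPLE_RATE) for n in range(SAMPLE_RATE // 10)]
spec = spectrogram(tone)
image = to_grayscale(spec)
```

For the 800 Hz test tone, all the energy ends up in one bright vertical stripe around frequency bin 8 (800 Hz divided by the 100 Hz bin width). Footsteps or gunshots would produce their own characteristic patterns, which is what the vision encoder has to learn to read.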

Outlook

xXx_GR00T_xXx is just the beginning. Throughout the development process, we’ve observed many interesting and promising characteristics of using RFMs in game environments with imitation learning.

With j00nas and t00ni, we saw how RFMs can mimic the personal play-styles observed in the training data. Thanks to imitation learning, learning new play-styles is a matter of recording representative data - instead of defining the desired behaviour in code. We see huge potential to leverage this approach to enable a new level of realistic bot personas in future video games, customized for each game character and controlled via the prompt. We will continue training different xXx_GR00T_xXx personas to fill different roles in a competitive 5-player Counter-Strike team.

Another interesting area for future work is gaming foundation models, that is, models that can play arbitrary, even previously unseen games. So far, we’ve basically overfitted xXx_GR00T_xXx on Counter-Strike. Other games technically work thanks to GR00T’s strong world knowledge, but obviously, the performance is far from ideal. By training one model on a massive dataset of many different video games, we could potentially create a gaming foundation model that can understand and play new games based on the information in the prompt. This would of course also apply to unreleased games, opening up new possibilities for automated play-testing in games development.

In the process of developing xXx_GR00T_xXx, we built reusable pipelines for data recording, fine-tuning and inference in video games. Through the use of a generic and universal interface - video inputs and gamepad controls - we can quickly prototype new domains, even outside of gaming. In the last months, we’ve applied the same pipeline to train a model for facility management with a quadruped robot, evaluate autonomous driving models in GTA V and test autonomous cargo handling in a logistics simulator.